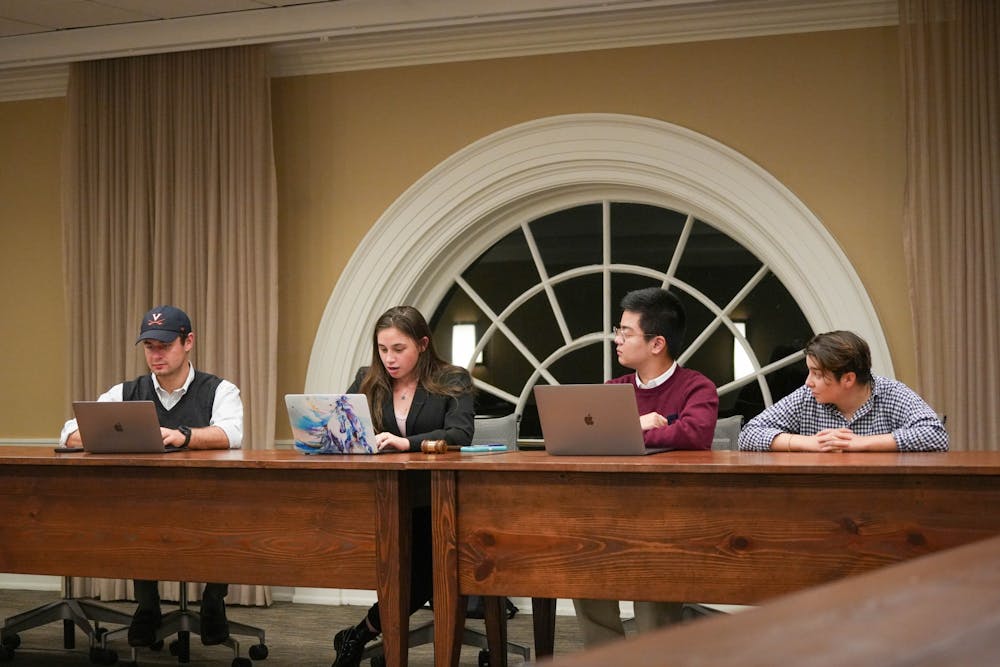

Members of the Honor Committee discussed the possible impacts of AI software like ChatGPT on academic integrity in the Committee's first meeting of the new semester Sunday. The meeting fell two members short of quorum as only 13 out of 22 members were present, meaning the Committee could not vote on constitutional matters and bylaws.

Hamza Aziz, chair for investigations and third-year College student, discussed ways to possibly combat the use of artificial intelligence like ChatGPT, suggesting a town hall and bringing in experts to discuss academic integrity.

ChatGPT is an artificial intelligence chatbot that generates text in response to user prompts. The software has recently exploded in popularity, gaining over 1 million users in just one week following its Nov. 30 launch. More recently, the program has been used to write academic papers and complete academic assignments, uses that would violate the University’s Honor Code.

The Committee sought insight on the issue from Evan Pivonka, faculty advisor to the Committee and Politics professor, who said the University should define expectations surrounding artificial intelligence on assignments.

“It's going to require really clear guidance from professors on what is the acceptable use of these new things and what isn't,” Pivonka said. “I think it's going to take a lot of back and forth working with the Provost and faculty.”

Currently, there is no definitive way to detect AI-generated answers or papers, though some programs can give a confidence percentage indicating how likely it is that AI completed an assignment or paper. Graduate Engineering student Rep. Kevin Lin discussed these detection programs and their implications for Honor cases.

According to the Committee, the main concern surrounding generative AI software like ChatGPT is that Honor will have no way to prove beyond a reasonable doubt that a student used AI on an assignment or exam.

“[The programs] tell you that it's 99.9 percent confident or 98 percent confident — the problem is whether or not we're willing to find somebody guilty based on 99 percent confidence rate,” Lin said. “[The only way] is to create another AI to discriminate between the real and the generated, and that takes a very long time.”

Gabrielle Bray, chair of the Committee and fourth-year College student, discussed the possibility of developing a statement that highlights the uncertainty behind AI software. Bray said that it is acceptable for the Committee to not know what evidence of cheating with AI software will look like until a case is brought forward.

Fourth-year College student Rep. Sullivan McDowell discussed the urgency of a solution to AI software and the possible impact it could have on Honor and student responsibility.

“What I don't want to see happen here is us having no way to decide whether or not something has been written by artificial intelligence,” McDowell said. “We [could] just have students walking around who regularly submit papers that they have not written.”

Outside of discussions about AI, the Committee also heard school and executive updates. Honor Support Officers are now holding monthly meetings instead of weekly meetings. There are currently eight active investigations.

The Committee entered a brief closed session at 7:40 p.m. to discuss case updates and returned at 7:50 p.m. The Committee dedicated the remainder of the meeting to event planning and drafting a statement to the University community regarding generative AI. Last semester, the Committee’s planned Constitutional Convention to solicit input on drafting a multi-sanction system was pushed back to January, and the group did not provide any updates on the event’s timing.

The next Honor Committee meeting will be held Sunday at 7 p.m. in the Trial Room of Newcomb Hall.